The 6-Week Roadmap to Enterprise AI Visibility: A Step-by-Step GEO Playbook

Enterprise teams keep asking us the same question: "How long until we see our product recommended in ChatGPT, Gemini, and Claude?"

The honest answer is that Generative Engine Optimization (GEO) is not a one-click fix. It is a structured process (measure, fix, re-measure) that compounds quickly once the foundation is in place. In our experience working with B2B and consumer enterprise customers, six weeks is enough to go from a zero baseline to the first measurable lift in Share of Model; from week 7 onwards, the platform is fully warmed up and the data becomes a reliable input for ongoing strategy.

A premium example of technical product visibility: the Infineon PSoC Edge.

This article walks through that roadmap week by week. It also clarifies one thing that often gets lost in sales conversations: BobUpAI is built to be used self-service. The BobUpAI team is available as an accelerator, but every step below can be executed by your in-house marketing or product team without us in the room.

Why a Roadmap (and Not a One-Time Audit)?

A one-time AI visibility audit tells you where you stand today. It does not change anything. AI models update their answers based on what they re-crawl, what new third-party content appears, and how their underlying training and retrieval signals evolve. To move the metric, you need a cycle: baseline → fix → verify → expand.

Six weeks is the minimum sensible window because:

- LLM answers stabilize over rolling 5-7 day windows. A single day's snapshot is noisy.

- Newly published or updated content typically takes 7-14 days to be reflected in answer engines that use Retrieval-Augmented Generation (RAG).

- Optimizing one prompt deeply teaches you more than optimizing 50 prompts shallowly. You need time to do the deep work.
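To make the "single day's snapshot is noisy" point concrete, here is a minimal sketch of a rolling Share of Model. It assumes that a day's Share of Model is the fraction of sampled answers that mention your brand, and it smooths that value over a trailing 7-day window. The data shape, the simple substring match, and the 7-day default are illustrative assumptions, not BobUpAI's internal method.

```python
from collections import deque


def daily_share_of_model(answers: list[str], brand: str) -> float:
    """Fraction of sampled answers that mention the brand (naive, case-insensitive match)."""
    if not answers:
        return 0.0
    hits = sum(1 for text in answers if brand.lower() in text.lower())
    return hits / len(answers)


def rolling_share_of_model(daily: list[float], window: int = 7) -> list[float]:
    """Trailing mean over the last `window` days (uses fewer days at the start of the series)."""
    buf: deque[float] = deque(maxlen=window)
    smoothed = []
    for value in daily:
        buf.append(value)
        smoothed.append(sum(buf) / len(buf))
    return smoothed


if __name__ == "__main__":
    # Hypothetical daily values for one prompt on one engine: jumpy day to day.
    days = [0.0, 0.4, 0.2, 0.0, 0.6, 0.2, 0.4, 0.6]
    print([round(x, 2) for x in rolling_share_of_model(days)])
```

The smoothed series moves far less than the raw daily values, which is why decisions should wait for at least one full window of data.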

The Three Layers of GEO Optimization

Before walking through the weekly plan, it helps to name the three optimization layers that the roadmap moves in parallel. Most enterprise teams treat GEO as a content exercise, but a meaningful Share of Model lift comes from compounding all three at once.

Layer 1 — On-Page Crawlability and Structure

The foundation. Make every product, pricing, and FAQ page reachable and parsable for GPTBot, Google-Extended, ClaudeBot, and PerplexityBot. This covers bot access (robots.txt, edge rules), structured data (JSON-LD, FAQ Schema, Product Schema), and clear "Problem → Solution → Proof" framing. Skip this and every later optimization is muted, because the AI cannot reliably reach or interpret what you publish.
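As an illustration of the structured-data part of Layer 1, the sketch below serializes question/answer pairs as a Schema.org `FAQPage` in JSON-LD, wrapped in the `<script type="application/ld+json">` tag that crawlers read. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` / `acceptedAnswer` properties are standard Schema.org vocabulary; the helper names and the sample content are hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as a Schema.org FAQPage JSON-LD document."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element that structured-data parsers look for."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical product and copy, for illustration only.
    print(as_script_tag(faq_jsonld([
        ("What does YourCo do?",
         "YourCo is a hypothetical observability platform used here as an example."),
    ])))
```

Product Schema follows the same pattern with `"@type": "Product"` and its own properties; validate the output with a structured-data testing tool before shipping it.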

Layer 2 — Authority Platform Placement

LLMs disproportionately cite a small set of high-authority platforms — LinkedIn long-form posts, Reddit threads, industry-specific forums, and curated listicles on category-leader publications. Closing a Citation Gap often means publishing a single well-targeted Reddit answer or LinkedIn post on the platform the model already trusts, not endlessly tuning your own marketing site.

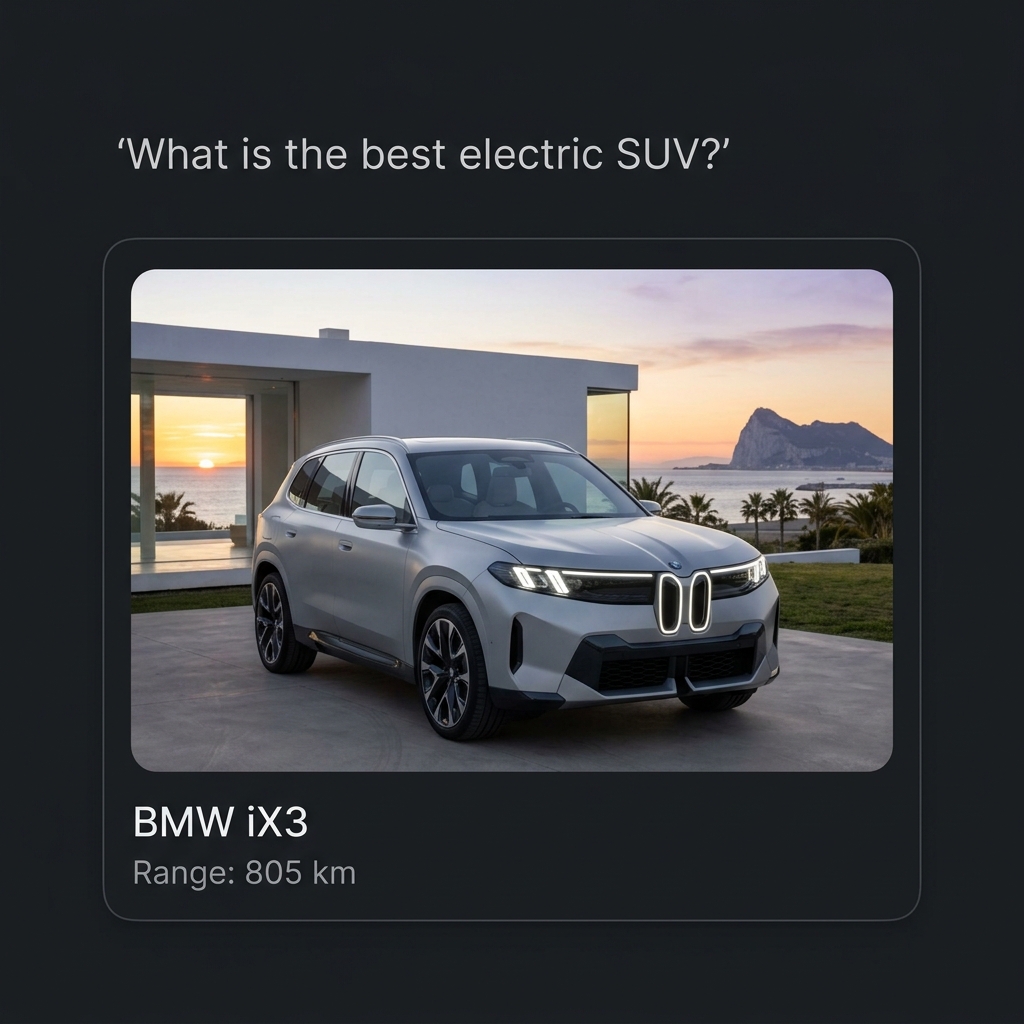

Layer 3 — Multimedia and Video

Video transcripts are now a primary training and retrieval signal for Gemini and ChatGPT. A YouTube explainer with a clean transcript, supporting timestamps, and clear product framing acts as a high-trust source the model will cite back when it answers "What is the best X for Y?". Multimedia is the single most under-invested GEO layer at most enterprises.
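For the "supporting timestamps" part, YouTube builds chapters from a list of timestamps in the video description. The sketch below assumes you already have section titles and start times (in seconds) from your transcript outline. The helper names are hypothetical, and the checks only cover the chapter rules YouTube's help pages describe at the time of writing: the first chapter starts at 0:00, there are at least three chapters in ascending order, and each is at least 10 seconds long.

```python
def format_timestamp(seconds: int) -> str:
    """Render seconds as M:SS, or H:MM:SS for videos longer than an hour."""
    hours, rest = divmod(seconds, 3600)
    minutes, secs = divmod(rest, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def chapter_list(chapters: list[tuple[int, str]], min_len: int = 10) -> str:
    """Build a description-ready chapter list after checking the basic chapter rules.

    The last chapter's length depends on the video duration, so it is not checked here.
    """
    if len(chapters) < 3:
        raise ValueError("YouTube needs at least three chapters")
    starts = [start for start, _ in chapters]
    if starts[0] != 0:
        raise ValueError("the first chapter must start at 0:00")
    for earlier, later in zip(starts, starts[1:]):
        # Also catches out-of-order timestamps, since the difference is then negative.
        if later - earlier < min_len:
            raise ValueError(f"chapter at {later}s is shorter than {min_len}s or out of order")
    return "\n".join(f"{format_timestamp(s)} {title}" for s, title in chapters)


if __name__ == "__main__":
    print(chapter_list([
        (0, "What problem YourCo solves"),      # hypothetical outline
        (45, "Fintech compliance use case"),
        (130, "Setup walkthrough"),
    ]))
```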

Each week below moves all three layers forward in coordination, not sequentially.

The 6-Week Roadmap at a Glance

| Week | Focus | Outcome |
|---|---|---|
| 1 | Prompt alignment & baseline measurement | Locked-in prompt set; status-quo Share of Model across ChatGPT, Gemini, Claude |
| 2 | Website readiness & technical foundation | Bots can access and parse your product pages; optional GSC integration live |
| 3 | Focus-prompt selection & first content fixes | 3-5 high-priority prompts chosen; first round of optimized content shipped |
| 4 | Re-measurement & content expansion | First lift visible on optimized prompts; expansion to adjacent prompts |
| 5 | Competitor displacement & sentiment tuning | Citation gaps closed against named competitors; sentiment shifts measured |
| 6 | Iteration, evaluation & playbook codification | Consistent gains across the priority prompt set; repeatable playbook documented |
| 7+ | Steady-state operation | Tool fully warmed up; data is decision-grade; cadence shifts to weekly optimization |

Before / After: What "moving the metric" actually looks like

Prompt: "Best B2B observability platform for fintech?"

Week 1 (baseline):

- ChatGPT cites: Datadog · New Relic · Splunk · Honeycomb · Grafana

- Your product: not mentioned.

Week 6 (post-roadmap):

- ChatGPT cites: Datadog · YourCo · New Relic · Honeycomb · Grafana

- Sentiment moved from absent → "specifically recommended for fintech compliance use cases."

Same prompt, same model. The difference is six weeks of structured Layer 1 + Layer 2 + Layer 3 work — including a single Reddit thread the model now treats as canonical.

Week 1 — Align on Prompts and Establish the Baseline

Everything downstream depends on measuring the right prompts. Optimizing for prompts your buyers don't actually use is the most common GEO failure mode.

What happens in Week 1:

- Prompt generation — three modes. BobUpAI supports three ways to build the prompt set, and the strongest enterprise rollouts use a deliberate mix:

  - Smart Mode: the platform generates 100+ prompt candidates from your product category, value proposition, USPs, and competitive set. Best when you are starting a new GEO program and need fast coverage.

  - Expert Mode: your team enters prompts directly, such as questions sales hears on calls, transactional buyer prompts ("What is the best vacuum robot for…"), and queries you already track in traditional SEO.

  - Hybrid Mode: the recommended path. Use Smart Mode to generate breadth, then layer Expert Mode on top to inject institutional knowledge an LLM cannot guess. This is where the strongest prompt sets come from.

- Lock the set. A locked prompt set of 50-150 prompts is typical for an enterprise rollout. Bigger sets dilute the signal in the early weeks.

- Run the baseline. BobUpAI runs the full prompt set across ChatGPT, Gemini, Claude (and Perplexity if relevant) to establish the status quo.
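The Hybrid Mode logic can be pictured as a merge: Expert prompts go in first because they carry institutional knowledge, Smart Mode candidates fill the remaining slots, near-duplicates are dropped, and the set is capped before it is locked. This is a hypothetical sketch of that idea, not how BobUpAI implements it; the normalization rule and the default cap of 150 (the top of the 50-150 range above) are assumptions.

```python
import re


def normalize(prompt: str) -> str:
    """Crude duplicate key: lowercase, strip punctuation, collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", prompt.lower())
    return " ".join(cleaned.split())


def build_hybrid_set(expert: list[str], smart: list[str], cap: int = 150) -> tuple[str, ...]:
    """Expert prompts first, then Smart Mode candidates, deduplicated and capped."""
    seen: set[str] = set()
    merged: list[str] = []
    for prompt in [*expert, *smart]:
        if len(merged) >= cap:
            break
        key = normalize(prompt)
        if key and key not in seen:
            seen.add(key)
            merged.append(prompt)
    # Returning a tuple makes the "locked" set immutable for the rest of the program.
    return tuple(merged)
```

Putting Expert prompts first means that when the cap bites, it is the generated candidates that get cut, not the prompts your team knows matter.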

What you have at the end of week 1: A locked prompt set and a status-quo Share of Model per prompt per engine. Importantly, you do not start optimizing yet. Let the baseline run for the full week so you have a stable reference point against which future weeks are measured.
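A status-quo Share of Model "per prompt per engine" is essentially a grouped average over the baseline runs. A minimal sketch, assuming each run is recorded as a hypothetical `(prompt, engine, mentioned)` tuple:

```python
from collections import defaultdict

Run = tuple[str, str, bool]  # (prompt, engine, was the brand mentioned?)


def baseline(runs: list[Run]) -> dict[tuple[str, str], float]:
    """Share of Model per (prompt, engine): runs that mention you divided by total runs."""
    counts: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])
    for prompt, engine, mentioned in runs:
        cell = counts[(prompt, engine)]
        cell[0] += int(mentioned)  # mentions
        cell[1] += 1               # total runs
    return {key: hits / total for key, (hits, total) in counts.items()}
```

Keeping engines separate matters: the same prompt can sit at 0% on one engine and 50% on another, and an average across engines would hide that.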

Week 2 — Make Sure the Website is Actually Visible to AI Bots

A surprising share of enterprise websites are quietly blocking the very crawlers that feed answer engines. Before you write a single new piece of content, verify the foundation.

What happens in Week 2 (this is Layer 1 work — the on-page foundation everything else compounds on):

- Bot access check. BobUpAI verifies whether GPTBot, ClaudeBot, PerplexityBot, and other relevant agents can actually crawl your product pages, and whether your robots.txt allows the Google-Extended token that governs Google's use of your content for Gemini. Rules in robots.txt and edge-network blocks are the usual culprits.

- Product-page structure check. Are your product pages parsable? Clear headings, structured data (Schema.org / JSON-LD, FAQ Schema), feature tables, and "Problem/Solution" framing dramatically increase how confidently an LLM cites you. (Schema generation directly inside the platform is on the near-term roadmap; today BobUpAI flags the gaps and your team or our content service ships the markup.)

- Optional: connect Google Search Console. This unlocks two things — instant indexing pings when you publish optimized content, and access to your real-world query data so the platform's recommendations are tuned to how your buyers actually search.

- Optional: connect your CMS. Today, one-click publish is live for GitHub-backed sites (Next.js blogs, GitHub Pages, repo-driven Webflow exports). Native Webflow API and WordPress connectors are on the near-term roadmap — for those CMSs the platform produces a copy-ready draft your team pastes into the editor.
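Part of the bot access check can be reproduced with Python's standard-library `urllib.robotparser`. The sketch below evaluates a robots.txt body against the user-agent tokens named above. It only covers robots.txt, so edge-network blocks (CDN or WAF bot rules) still need a separate test with real requests. Google-Extended is a robots.txt control token rather than a separate crawler, so its result reflects whether Google may use the content for Gemini, not whether the page can be fetched. The sample robots.txt and URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = ("GPTBot", "Google-Extended", "ClaudeBot", "PerplexityBot")


def check_ai_access(robots_txt: str, url: str,
                    agents: tuple[str, ...] = AI_AGENTS) -> dict[str, bool]:
    """Return, per user-agent token, whether the given robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    # Hypothetical robots.txt that blocks GPTBot from the product catalog.
    sample = """\
User-agent: GPTBot
Disallow: /products/

User-agent: *
Allow: /
"""
    for agent, allowed in check_ai_access(sample, "https://example.com/products/edge").items():
        print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

In practice you would fetch the live file (for example with `RobotFileParser.set_url()` and `read()`) and run the check against every product, pricing, and FAQ URL, not just one.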

What you have at the end of week 2: A website that is technically ready to receive optimization work. Skipping this step means future content lifts will be muted because the AI can't reliably reach or parse what you publish.
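To see what the product-page structure check looks for, the sketch below extracts JSON-LD blocks from a page's HTML with the standard-library `html.parser` and lists the Schema.org `@type` values it finds, so a missing `Product` or `FAQPage` stands out. It is a simplified, hypothetical check: it ignores microdata and RDFa and does not validate required properties.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def schema_types(html: str) -> set[str]:
    """Return the @type values declared in a page's JSON-LD blocks."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    types: set[str] = set()
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            types.add("<invalid JSON-LD>")
            continue
        # Top level may be a single object, a list, or an object with an @graph array.
        items = data if isinstance(data, list) else data.get("@graph", [data])
        for item in items:
            if isinstance(item, dict):
                declared = item.get("@type", [])
                types.update([declared] if isinstance(declared, str) else declared)
    return types
```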

Weeks 3-6 — Select Focus Prompts, Optimize Content, Evaluate, Finetune

This is where the work compounds. The pattern is the same every week: pick the Low Hanging Fruits, fix the underlying Citation Gap across all three layers, wait for the engines to refresh, close the loop by re-measuring against the new URLs, then move on.

Week 3 — First focus round (Low Hanging Fruits). Select 3-5 high-priority prompts where you are absent or losing to a specific competitor. The strongest first picks are almost-visible prompts — ones where you are mentioned in passing but not in the top recommendation, or where a single competitor displaces you on prompts your product objectively fits better. These are the Low Hanging Fruits: highest impact-per-fix in the first week of optimization. Selection can come from two directions:

- BobUpAI Team suggestions. If you have an enterprise plan with team support, our team curates the priority list based on what we see across the industry.

- Self-service tool suggestions. The platform ranks prompts by impact (search volume × current visibility gap × competitive intensity) so your team can pick the priority list independently.
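The impact formula in parentheses is a product of three factors. The sketch below assumes each factor has already been normalized to a 0-1 scale (the source does not say how BobUpAI scales them) and treats the visibility gap as one minus your current Share of Model. The class, field names, and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptStats:
    prompt: str
    search_volume: float          # assumed normalized to 0-1
    share_of_model: float         # your current Share of Model, 0-1
    competitive_intensity: float  # assumed normalized to 0-1

    @property
    def impact(self) -> float:
        visibility_gap = 1.0 - self.share_of_model
        return self.search_volume * visibility_gap * self.competitive_intensity


def rank_focus_prompts(stats: list[PromptStats], top_n: int = 5) -> list[PromptStats]:
    """Highest-impact prompts first; top_n=5 mirrors the 3-5 focus prompts per round."""
    return sorted(stats, key=lambda s: s.impact, reverse=True)[:top_n]
```

Because the factors multiply, a prompt you already own (Share of Model near 1) scores near zero regardless of volume, which keeps effort on prompts where a fix can still move the metric.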

For each focus prompt, BobUpAI generates an Action Plan that spans all three optimization layers:

- Layer 1 (On-Page): which page to update, which Schema fields to add, which sections to restructure, and a draft of the optimized copy itself.

- Layer 2 (Authority): which third-party platforms (LinkedIn, Reddit, industry forums, listicle publishers) to target, with a copy-ready post draft tuned to the platform's tone.

- Layer 3 (Multimedia): which YouTube angle to record, with a transcript skeleton optimized for retrieval and the timestamp structure that performs.

Week 4 — Closed-Loop Tracking and expansion. Re-run the affected prompts and register the new URLs (your blog post, the LinkedIn article, the YouTube link) inside BobUpAI so the platform can attribute the lift directly to the specific assets you shipped. This is the Closed-Loop Tracking step — without it you only know the metric moved; with it you know which asset moved it. Expect first measurable lifts on the prompts you tackled in week 3 (a move from 0% → 40-60% Share of Model on a single prompt is realistic). Add the next 5-10 prompts to the active queue.
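Closed-Loop Tracking comes down to comparing Share of Model before and after an asset ships, and checking whether the engines actually cite the registered URL. A minimal sketch under those assumptions: the `Answer` record shape, the use of the registration date as the split point, and the URLs are hypothetical, and real attribution would also need to account for the 7-14 day re-crawl lag mentioned earlier.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Answer:
    day: date
    mentioned: bool         # brand appears in the answer
    cited_urls: list[str]   # sources the engine cited


def share(answers: list[Answer]) -> float:
    """Share of Model over a set of answers (0.0 if there are none)."""
    return sum(a.mentioned for a in answers) / len(answers) if answers else 0.0


def asset_report(answers: list[Answer], asset_url: str, registered: date) -> dict[str, float]:
    """Share of Model before/after registration, plus how often the asset itself is cited."""
    before = [a for a in answers if a.day < registered]
    after = [a for a in answers if a.day >= registered]
    cited = sum(asset_url in a.cited_urls for a in after)
    return {
        "before": share(before),
        "after": share(after),
        "lift": share(after) - share(before),
        "asset_citation_rate": cited / len(after) if after else 0.0,
    }
```

The citation rate is what separates "the metric moved" from "this asset moved it": a lift with zero citations of the registered URL points to some other cause.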

Week 5 — Competitor displacement and sentiment. Now that you are appearing, optimize how you are described. Identify the citation sources driving competitor wins — almost always a mix of Layer 2 placements (a Reddit thread, a top-3 listicle, a comparison post on a category-leader site) — and produce content tuned to displace them. Watch the sentiment metric: moving from "neutral" to "highly recommended" often matters more than raw mention count.
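Identifying the citation sources behind competitor wins can start as a simple count: across answers where a named competitor is recommended and you are not, which domains are cited most often? A hypothetical sketch, assuming each answer record is a dict carrying the brands it recommends and the URLs it cites:

```python
from collections import Counter
from urllib.parse import urlparse


def competitor_sources(answers: list[dict], competitor: str, you: str,
                       top: int = 5) -> list[tuple[str, int]]:
    """Most frequently cited domains in answers the competitor wins without you."""
    domains: Counter[str] = Counter()
    for answer in answers:
        brands = answer["brands"]
        if competitor in brands and you not in brands:
            # Count each domain once per answer so one long source list doesn't dominate.
            domains.update({urlparse(url).netloc.removeprefix("www.")
                            for url in answer["cited_urls"]})
    return domains.most_common(top)
```

Domain-level counts are only a first cut; the next step is opening the specific threads, listicles, or comparison posts behind the top domains and deciding which one to displace.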

Week 6 — Iterate and codify. By week 6 you should have consistent gains across the priority prompt set. The work shifts from "what do we fix" to "how do we make this repeatable." Document the playbook so the cadence becomes ongoing operations rather than a launch project.

Week 7 and Beyond — Steady-State Operation

By week 7, two things change.

First, the platform itself is fully warmed up: trend lines have enough data points to be statistically meaningful, cause-of-change attribution becomes reliable, and competitor benchmarks stabilize. Decisions made on week 7+ data are decision-grade rather than directional.
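One way to see why more data points make trend lines meaningful is a confidence interval on Share of Model. The sketch below uses the Wilson score interval for a proportion, a standard statistical technique chosen here for illustration (the source does not say which method BobUpAI uses). The same 40% Share of Model yields a much wider interval from 5 runs than from 100.

```python
from math import sqrt


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% (z=1.96) Wilson score interval for Share of Model."""
    if runs == 0:
        return (0.0, 1.0)  # no data: any value is possible
    p = mentions / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = (z / denom) * sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs))
    return (max(0.0, center - half), min(1.0, center + half))


if __name__ == "__main__":
    print(wilson_interval(2, 5))     # 40% from 5 runs: wide
    print(wilson_interval(40, 100))  # 40% from 100 runs: much narrower
```

When the intervals for week N and week N+1 barely overlap, the change is more likely real than noise; early in a program they usually overlap heavily.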

Second, your team's cadence shifts. The intense weekly cycle of weeks 3-6 settles into a sustainable rhythm — typically a weekly review of new prompt opportunities, monthly deeper competitive intelligence sweeps, and quarterly strategy resets when your product or category shifts.

This is the steady state most enterprise customers operate in. The roadmap isn't something you run once; it's the on-ramp to ongoing GEO operations.

Who Does What: BobUpAI vs. Your Team

One of the most common questions in enterprise procurement is: "What exactly will BobUpAI do, and what is on us?" Here is the honest split.

| Activity | BobUpAI | Your Team |
|---|---|---|
| Prompt generation & coverage | Auto-suggests 100+ relevant prompts; runs them across all major LLMs | Reviews the set; adds prompts your team already knows matter |
| Baseline measurement | Runs the full prompt set; produces the Share of Model dashboard | Reviews the dashboard with stakeholders |
| Website bot-access audit | Detects blocks and structural issues; reports the fixes | Implements the technical fixes (robots.txt, Schema, edge config) |
| Action Plan generation | Produces per-prompt Action Plans with concrete recommendations | Decides which Action Plans to prioritize this week |
| Content drafting (Layer 1) | Generates optimized drafts validated against the target prompts | Reviews, edits to match brand voice, approves |
| Authority & multimedia drafts (Layers 2 & 3) | Generates LinkedIn / Reddit posts and YouTube transcript outlines aligned to your priority prompts | Posts under your team's accounts; records and uploads video |
| Publishing | 1-click publish for GitHub-backed sites today; copy-ready drafts for Webflow and WordPress (native connectors on the roadmap) | Owns the final approval and scheduling |
| Closed-Loop Tracking | Re-measures Share of Model after you register the new URLs and attributes lift to the specific asset | Adds the URLs of every shipped asset (blog, LinkedIn, YouTube) into the platform |
| Competitor citation mapping | Surfaces which third-party sources drive competitor wins | Decides outreach strategy (PR, reviews, listicle inclusion) |
| Strategy & prioritization | Provides data and ranked recommendations | Owns the strategic decisions |

The pattern is consistent: BobUpAI does the measurement, the heavy data work, and the first-draft generation. Your team owns judgment, brand voice, and final approval.

You Don't Need the BobUpAI Team to Run This

This is worth saying clearly: the entire 6-week roadmap above can be executed self-service. Every step — prompt generation, baseline measurement, bot-access audit, Action Plan generation, content drafting, publishing, re-measurement — is built into the platform and designed to be run by a Product Marketing Manager, growth lead, or content strategist without any involvement from us.

When does it make sense to add team support? Three cases:

- Speed to first result matters. Our team can compress the week-1 prompt alignment and week-3 focus selection into days rather than a week each.

- Highly regulated or technical category. Industries like medical, legal, or industrial B2B benefit from a category specialist on the call when sentiment and accuracy carry compliance risk.

- Multi-product or multi-region rollout. Coordinating the same playbook across many product lines or geographies benefits from an outside operator who has run it before.

For a single product line in a non-regulated category, the self-service path is the most common — and it is the path the platform is designed for.

💡 Key Takeaways

- GEO is three layers, not just content. On-Page (crawlability + Schema), Authority Platforms (LinkedIn, Reddit, forums), and Multimedia (YouTube transcripts) compound together — optimizing any single layer in isolation underperforms.

- Six weeks is the minimum sensible window to go from a zero baseline to a measurable Share of Model lift, because LLM answers need stable measurement windows and content needs time to be re-crawled.

- Week 1 is for measurement, not optimization. Build the prompt set in Smart, Expert, or Hybrid mode, lock it, and run the baseline. Resist the urge to start fixing things before you know where you stand.

- Week 2 is the unglamorous but critical Layer 1 foundation. A blocked GPTBot or unparseable product page will mute every content lift you ship later.

- Weeks 3-6 are where compounding starts — and "Low Hanging Fruits" (almost-visible prompts) are where to start. Close the loop on every fix by registering the new URL so the platform can attribute the lift to a specific asset.

- From week 7 the data becomes decision-grade and the operating cadence shifts from project mode to steady-state operations.

- BobUpAI handles measurement, drafting, and Closed-Loop Tracking. Your team owns judgment and approval. The workflow is designed so that a single person can run the platform end-to-end without team support.

Ready to start your 6-week roadmap?

- Run your free baseline scan to see where you stand on day one.

- Walk through the platform tutorial to see exactly how the workflow runs in practice.
